AI evaluation platform - AI tools

Freeplay: The All-in-One Platform for AI Experimentation, Evaluation, and Observability

Freeplay provides comprehensive tools for AI teams to run experiments, evaluate model performance, and monitor production, streamlining the development process.

- Paid

- From $500

Arize: Unified Observability and Evaluation Platform for AI

Arize is a comprehensive platform designed to accelerate the development of AI applications and agents and to improve how they perform in production.

- Freemium

- From $50

Future AGI: World’s first comprehensive evaluation and optimization platform to help enterprises achieve 99% accuracy in AI applications across software and hardware.

Future AGI is a comprehensive evaluation and optimization platform designed to help enterprises build, evaluate, and improve AI applications, aiming for high accuracy across software and hardware.

- Freemium

- From $50

Evidently AI: Collaborative AI observability platform for evaluating, testing, and monitoring AI-powered products

Evidently AI is a comprehensive AI observability platform that helps teams evaluate, test, and monitor LLM and ML models in production, offering data drift detection, quality assessment, and performance monitoring capabilities.

- Freemium

- From $50

Lisapet.ai: AI prompt testing suite for product teams

Lisapet.ai is an AI development platform designed to help product teams prototype, test, and deploy AI features efficiently by automating prompt testing.

- Paid

- From $9

Coherence: AI-Augmented Testing and Deployment Platform

Coherence provides AI-augmented testing for evaluating AI responses and prompts, alongside a platform for streamlined cloud deployment and infrastructure management.

- Freemium

- From $35

Braintrust: The end-to-end platform for building world-class AI apps.

Braintrust provides an end-to-end platform for developing, evaluating, and monitoring Large Language Model (LLM) applications. It helps teams build robust AI products through iterative workflows and real-time analysis.

- Freemium

- From $249

Gentrace: Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It supports collaborative development and helps teams maintain the quality of their LLM applications.

- Usage Based

Humanloop: The LLM evals platform for enterprises to ship and scale AI with confidence

Humanloop is an enterprise-grade platform that provides tools for LLM evaluation, prompt management, and AI observability, enabling teams to develop, evaluate, and deploy trustworthy AI applications.

- Freemium

HoneyHive: AI Observability and Evaluation Platform for Building Reliable AI Products

HoneyHive is a comprehensive platform that provides AI observability, evaluation, and prompt management tools to help teams build and monitor reliable AI applications.

- Freemium

Weco: The AI Research Engineer Turning Benchmarks into Breakthroughs

Weco uses its AI research engineer, AIDE, to automate code optimization and research through benchmark-driven experimentation, delivering measurable performance improvements.

- Contact for Pricing

Benchx: Customize and streamline your agent evaluations

Benchx offers a platform to create custom evaluation datasets and run AI agent tests in managed sandboxed environments, providing deep performance insights.

- Contact for Pricing

Oumi: The Open Platform for Building, Evaluating, and Deploying AI Models

Oumi provides an open, collaborative platform for researchers and developers to build, evaluate, and deploy state-of-the-art AI models, from data preparation to production.

- Contact for Pricing

ech0: Hybrid Human-AI Testing for Safer AI Deployments

ech0 provides comprehensive, scalable testing for AI agents, identifying security vulnerabilities, consistency issues, and policy compliance problems before production deployment.

- Freemium

LastMile AI: Ship generative AI apps to production with confidence.

LastMile AI empowers developers to move generative AI applications from prototype to production with a robust developer platform.

- Contact for Pricing

- API

-

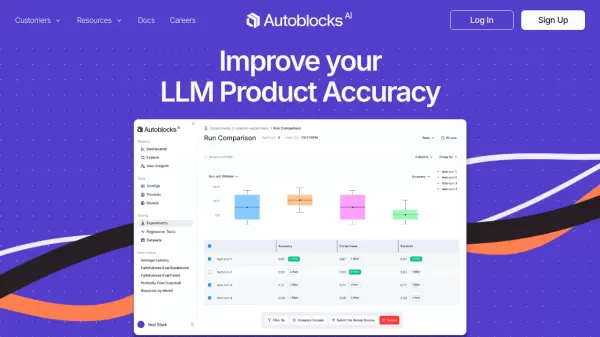

Autoblocks: Improve your LLM Product Accuracy with Expert-Driven Testing & Evaluation

Autoblocks is a collaborative testing and evaluation platform for LLM-based products that automatically improves through user and expert feedback, offering comprehensive tools for monitoring, debugging, and quality assurance.

- Freemium

- From $1,750

Hegel AI: Developer Platform for Large Language Model (LLM) Applications

Hegel AI provides a developer platform for building, monitoring, and improving large language model (LLM) applications, featuring tools for experimentation, evaluation, and feedback integration.

- Contact for Pricing

-

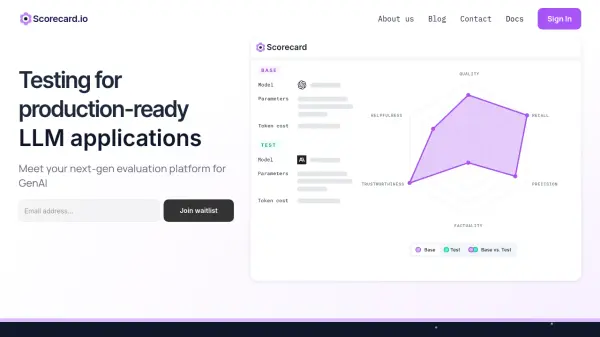

Scorecard.io: Testing for production-ready LLM applications, RAG systems, agents, and chatbots.

Scorecard.io is an evaluation platform designed for testing and validating production-ready Generative AI applications, including LLMs, RAG systems, agents, and chatbots. It supports the entire AI production lifecycle from experiment design to continuous evaluation.

- Contact for Pricing

Langtrace: Transform AI Prototypes into Enterprise-Grade Products

Langtrace is an open-source observability and evaluations platform designed to help developers monitor, evaluate, and enhance AI agents for enterprise deployment.

- Freemium

- From $31

Maxim: Simulate, evaluate, and observe your AI agents

Maxim is an end-to-end evaluation and observability platform designed to help teams ship AI agents reliably and more than 5x faster.

- Paid

- From $29

Distributional: The Modern Enterprise Platform for AI Testing

Distributional is an enterprise platform for AI testing, designed to give teams confidence in the reliability of their AI and ML applications. It offers a proactive approach to mitigating the risks associated with unpredictable AI systems.

- Contact for Pricing

Adaline: Ship reliable AI faster

Adaline is a collaborative platform for teams building with Large Language Models (LLMs), enabling efficient iteration, evaluation, deployment, and monitoring of prompts.

- Contact for Pricing

aixblock.io: Productize AI using Decentralized Resources with Flexibility and Full Privacy Control

AIxBlock is a decentralized platform for AI development and deployment, offering access to computing power, AI models, and human validators. It ensures privacy, scalability, and cost savings through its decentralized infrastructure.

- Freemium

- From $69

Basalt: Integrate AI in your product in seconds

Basalt is an AI building platform that helps teams quickly create, test, and launch reliable AI features. It offers tools for prototyping, evaluating, and deploying AI prompts.

- Freemium

teammately.ai: The AI Agent for AI Engineers that autonomously builds AI Products, Models and Agents

Teammately is an autonomous AI agent that iterates on AI products, models, and agents by itself until they meet specific objectives, applying a scientific methodology and comprehensive testing to go beyond what human-only teams can achieve.

- Freemium

TradingPlatforms.ai: Your Guide to Reviews of AI Trading Platforms, Bots, and Tools

TradingPlatforms.ai is a comprehensive review platform that provides detailed analysis and evaluations of AI trading platforms, bots, and tools to support traders and investors in making informed decisions.

- Free

GenesisAI: Global AI API Marketplace to Discover, Test, and Connect

GenesisAI is a global marketplace enabling users to discover, compare, test, and integrate state-of-the-art AI APIs for various applications.

- Usage Based

Okareo: Error Discovery and Evaluation for AI Agents

Okareo provides error discovery and evaluation tools for AI agents, enabling faster iteration, increased accuracy, and optimized performance through advanced monitoring and fine-tuning.

- Freemium

- From $199

Midscene.js: Joyful Automation by AI for Web, Android, Automation & Testing

Midscene.js is an AI-powered operator designed for web and Android automation and testing. It lets users interact, query, and assert using natural language commands, simplifying script creation and maintenance.

- Free

-

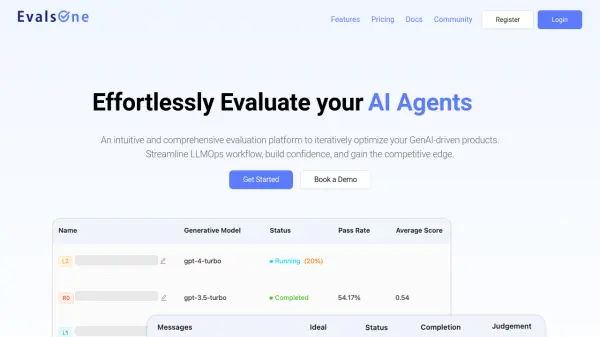

EvalsOne: Evaluate LLMs & RAG Pipelines Quickly

EvalsOne is a platform for rapidly evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) pipelines using various metrics.

- Freemium

- From $19
