EvalsOne - Alternatives & Competitors

Evaluate LLMs & RAG Pipelines Quickly

EvalsOne is a platform for rapidly evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) pipelines using various metrics.

Ranked by Relevance

1

BenchLLM The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

- Other

2

Reva Use the right LLM for your task

Reva helps businesses test AI configurations and compare LLM outcomes to ensure optimal performance for their specific tasks, focusing on outcome-driven AI testing and model evaluation.

- Contact for Pricing

3

Compare AI Models AI Model Comparison Tool

Compare AI Models is a platform providing comprehensive comparisons and insights into various large language models, including GPT-4o, Claude, Llama, and Mistral.

- Freemium

4

Gentrace Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It facilitates collaborative development and ensures high-quality LLM applications.

- Usage Based

5

LastMile AI Ship generative AI apps to production with confidence.

LastMile AI empowers developers to seamlessly transition generative AI applications from prototype to production with a robust developer platform.

- Contact for Pricing

- API

6

OneLLM Fine-tune, evaluate, and deploy your next LLM without code.

OneLLM is a no-code platform enabling users to fine-tune, evaluate, and deploy Large Language Models (LLMs) efficiently. Streamline LLM development by creating datasets, integrating API keys, running fine-tuning processes, and comparing model performance.

- Freemium

- From 19$

7

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-click

LangWatch empowers AI teams to ship 10x faster with quality assurance at every step. It provides tools to measure, maximize, and easily collaborate on LLM performance.

- Paid

- From 59$

8

LLM Pricing A comprehensive pricing comparison tool for Large Language Models

LLM Pricing is a website that aggregates and compares pricing information for various Large Language Models (LLMs) from official AI providers and cloud service vendors.

- Free

9

ModelBench No-Code LLM Evaluations

ModelBench enables teams to rapidly deploy AI solutions with no-code LLM evaluations. It allows users to compare over 180 models, design and benchmark prompts, and trace LLM runs, accelerating AI development.

- Free Trial

- From 49$

10

Conviction The Platform to Evaluate & Test LLMs

Conviction is an AI platform designed for evaluating, testing, and monitoring Large Language Models (LLMs) to help developers build reliable AI applications faster. It focuses on detecting hallucinations, optimizing prompts, and ensuring security.

- Freemium

- From 249$

11

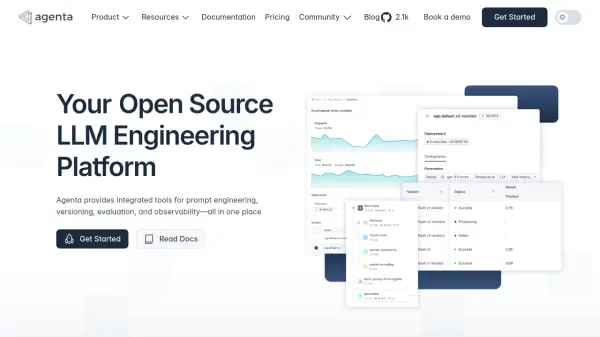

Agenta End-to-End LLM Engineering Platform

Agenta is an LLM engineering platform offering tools for prompt engineering, versioning, evaluation, and observability in a single, collaborative environment.

- Freemium

- From 49$

12

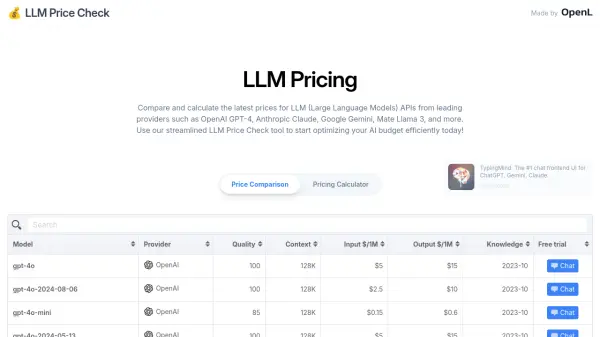

LLM Price Check Compare LLM Prices Instantly

LLM Price Check allows users to compare and calculate prices for Large Language Model (LLM) APIs from providers like OpenAI, Anthropic, Google, and more. Optimize your AI budget efficiently.

- Free

13

Hegel AI Developer Platform for Large Language Model (LLM) Applications

Hegel AI provides a developer platform for building, monitoring, and improving large language model (LLM) applications, featuring tools for experimentation, evaluation, and feedback integration.

- Contact for Pricing

14

Humanloop The LLM evals platform for enterprises to ship and scale AI with confidence

Humanloop is an enterprise-grade platform that provides tools for LLM evaluation, prompt management, and AI observability, enabling teams to develop, evaluate, and deploy trustworthy AI applications.

- Freemium

15

TheFastest.ai Reliable performance measurements for popular LLM models.

TheFastest.ai provides reliable, daily updated performance benchmarks for popular Large Language Models (LLMs), measuring Time To First Token (TTFT) and Tokens Per Second (TPS) across different regions and prompt types.

- Free

16

LLM Explorer Discover and Compare Open-Source Language Models

LLM Explorer is a comprehensive platform for discovering, comparing, and accessing over 46,000 open-source Large Language Models (LLMs) and Small Language Models (SLMs).

- Free

17

neutrino AI Multi-model AI Infrastructure for Optimal LLM Performance

Neutrino AI provides multi-model AI infrastructure to optimize Large Language Model (LLM) performance for applications. It offers tools for evaluation, intelligent routing, and observability to enhance quality, manage costs, and ensure scalability.

- Usage Based

18

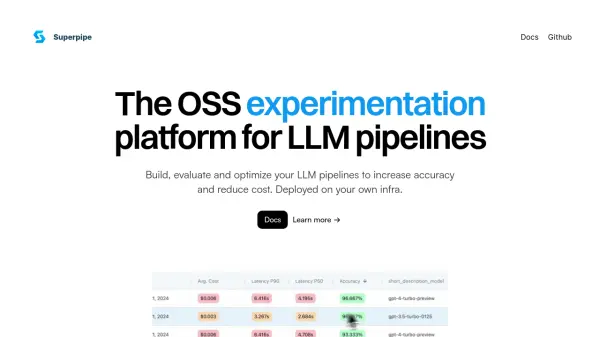

Superpipe The OSS experimentation platform for LLM pipelines

Superpipe is an open-source experimentation platform designed for building, evaluating, and optimizing Large Language Model (LLM) pipelines to improve accuracy and minimize costs. It allows deployment on user infrastructure for enhanced privacy and security.

- Free

19

Langbase The most powerful serverless platform for building AI products

Langbase is a serverless AI developer platform that enables developers to build, deploy, and manage AI products with composable infrastructure, featuring BaseAI - the first Web AI Framework.

- Freemium

- From 20$

20

OverallGPT Compare AI Models Side-by-Side

OverallGPT is a platform that allows users to compare responses from different AI models, enabling informed decisions for selecting the most accurate and relevant AI solutions.

- Free

21

MIOSN Stop overthinking LLMs. Find the optimal model at the lowest cost.

MIOSN helps users find the most suitable and cost-effective Large Language Model (LLM) for their specific tasks by analyzing and comparing different models.

- Free

22

Libretto LLM Monitoring, Testing, and Optimization

Libretto offers comprehensive LLM monitoring, automated prompt testing, and optimization tools to ensure the reliability and performance of your AI applications.

- Freemium

- From 180$

23

Intura Compare, Choose, and Save on AI & LLMs

Intura helps businesses experiment with, compare, and deploy AI and LLM models side-by-side to optimize performance and cost before full-scale implementation.

- Freemium

24

Adaline Ship reliable AI faster

Adaline is a collaborative platform for teams building with Large Language Models (LLMs), enabling efficient iteration, evaluation, deployment, and monitoring of prompts.

- Contact for Pricing

25

LangDB The Fastest Enterprise AI Gateway for Secure, Governed, and Optimized AI Traffic.

LangDB is an enterprise AI gateway designed to secure, govern, and optimize AI traffic across over 250 LLMs via a unified API. It helps reduce costs and enhance performance for AI workflows.

- Freemium

- From 49$

26

Langtail The low-code platform for testing AI apps

Langtail is a comprehensive testing platform that enables teams to test and debug LLM-powered applications with a spreadsheet-like interface, offering security features and integration with major LLM providers.

- Freemium

- From 99$

27

Scorecard.io Testing for production-ready LLM applications, RAG systems, Agents, Chatbots.

Scorecard.io is an evaluation platform designed for testing and validating production-ready Generative AI applications, including LLMs, RAG systems, agents, and chatbots. It supports the entire AI production lifecycle from experiment design to continuous evaluation.

- Contact for Pricing

28

PromptsLabs A Library of Prompts for Testing LLMs

PromptsLabs is a community-driven platform providing copy-paste prompts to test the performance of new LLMs. Explore and contribute to a growing collection of prompts.

- Free

29

Unify Build AI Your Way

Unify provides tools to build, test, and optimize LLM pipelines with custom interfaces and a unified API for accessing all models across providers.

- Freemium

- From 40$

30

RAGBuilder Build Optimized RAG Systems in Minutes, Not Months.

RAGBuilder is a platform designed to simplify the creation, optimization, and deployment of Retrieval-Augmented Generation (RAG) systems, significantly reducing development time and costs without requiring deep AI expertise.

- Contact for Pricing

31

Future AGI World’s first comprehensive evaluation and optimization platform to help enterprises achieve 99% accuracy in AI applications across software and hardware.

Future AGI is a comprehensive evaluation and optimization platform designed to help enterprises build, evaluate, and improve AI applications, aiming for high accuracy across software and hardware.

- Freemium

- From 50$

32

Braintrust The end-to-end platform for building world-class AI apps.

Braintrust provides an end-to-end platform for developing, evaluating, and monitoring Large Language Model (LLM) applications. It helps teams build robust AI products through iterative workflows and real-time analysis.

- Freemium

- From 249$

33

VESSL AI Operationalize Full Spectrum AI & LLMs

VESSL AI provides a full-stack cloud infrastructure for AI, enabling users to train, deploy, and manage AI models and workflows with ease and efficiency.

- Usage Based

34

LLM Optimize Rank Higher in AI Engines Recommendations

LLM Optimize provides professional website audits to help you rank higher in LLMs like ChatGPT and Google's AI Overview, outranking competitors with tailored, actionable recommendations.

- Paid

35

Lega Large Language Model Governance

Lega empowers law firms and enterprises to safely explore, assess, and implement generative AI technologies. It provides enterprise guardrails for secure LLM exploration and a toolset to capture and scale critical learnings.

- Contact for Pricing

36

Autoblocks Improve your LLM Product Accuracy with Expert-Driven Testing & Evaluation

Autoblocks is a collaborative testing and evaluation platform for LLM-based products that automatically improves through user and expert feedback, offering comprehensive tools for monitoring, debugging, and quality assurance.

- Freemium

- From 1750$

37

Requesty Develop, Deploy, and Monitor AI with Confidence

Requesty is a platform for faster AI development, deployment, and monitoring. It provides tools for refining LLM applications, analyzing conversational data, and extracting actionable insights.

- Usage Based

38

Prompt Hippo Test and Optimize LLM Prompts with Science.

Prompt Hippo is an AI-powered testing suite for Large Language Model (LLM) prompts, designed to improve their robustness, reliability, and safety through side-by-side comparisons.

- Freemium

- From 100$

39

Weavel Automate Prompt Engineering 50x Faster

Weavel optimizes prompts for LLM applications, achieving significantly higher performance than manual methods. Streamline your workflow and enhance your AI's accuracy with just a few lines of code.

- Freemium

- From 250$

40

OpenRouter A unified interface for LLMs

OpenRouter provides a unified interface for accessing and comparing various Large Language Models (LLMs), offering users the ability to find optimal models and pricing for their specific prompts.

- Usage Based

41

Vectorize Build RAG Applications 10X Faster

Vectorize is a RAG-as-a-Service platform designed to accelerate AI application development by simplifying the process of connecting unstructured data to Large Language Models (LLMs). It automatically extracts and optimizes data from various sources for efficient vector search.

- Freemium

- From 99$

42

LangSearch Connect your LLM applications to the world.

LangSearch is a Web Search API that offers natural language search and semantic reranking, providing clean and accurate context for LLM applications.

- Free

43

Parea Test and Evaluate your AI systems

Parea is a platform for testing, evaluating, and monitoring Large Language Model (LLM) applications, helping teams track experiments, collect human feedback, and deploy prompts confidently.

- Freemium

- From 150$

44

MLflow ML and GenAI made simple

MLflow is an open-source, end-to-end MLOps platform for building better models and generative AI apps. It simplifies complex ML and generative AI projects, offering comprehensive management from development to production.

- Free

45

AI Monitor Don’t Remain Blind in the Age of AI!

AI Monitor is a Generative Engine Optimization (GEO) platform helping brands track visibility and reputation across AI platforms like ChatGPT and Google AI Overviews.

- Contact for Pricing

46

Laminar The AI engineering platform for LLM products

Laminar is an open-source platform that enables developers to trace, evaluate, label, and analyze Large Language Model (LLM) applications with minimal code integration.

- Freemium

- From 25$

47

Open Source AI Gateway Manage multiple LLM providers with built-in failover, guardrails, caching, and monitoring.

Open Source AI Gateway provides developers with a robust, production-ready solution to manage multiple LLM providers like OpenAI, Anthropic, and Gemini. It offers features like smart failover, caching, rate limiting, and monitoring for enhanced reliability and cost savings.

- Free

48

OpenLIT Open Source Platform for AI Engineering

OpenLIT is an open-source observability platform designed to streamline AI development workflows, particularly for Generative AI and LLMs, offering features like prompt management, performance tracking, and secure secrets management.

- Other

49

Great Wave AI An operating system for agentic GenAI in government and regulated industries

Great Wave AI provides a platform for building and managing GenAI agents, focused on quality, trust, usability, control, and security & compliance for government and regulated industries.

- Contact for Pricing

50

FriendliAI Accelerate Generative AI Inference

FriendliAI provides a high-performance platform for accelerating generative AI inference, enabling fast, cost-effective, and reliable deployment and serving of Large Language Models (LLMs).

- Usage Based
