LLM performance analytics - AI tools

BenchLLM: The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

- Other

neutrino AI: Multi-model AI Infrastructure for Optimal LLM Performance

Neutrino AI provides multi-model AI infrastructure to optimize Large Language Model (LLM) performance for applications. It offers tools for evaluation, intelligent routing, and observability to enhance quality, manage costs, and ensure scalability.

- Usage Based

-

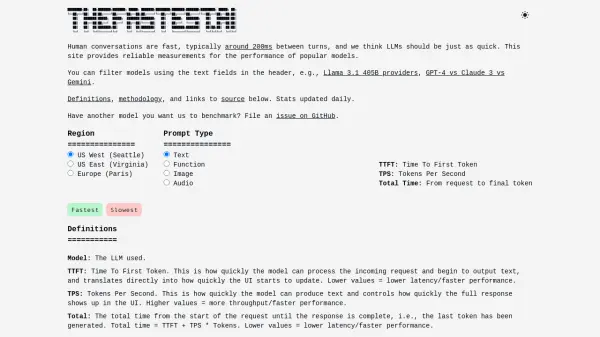

TheFastest.ai Reliable performance measurements for popular LLM models.

TheFastest.ai Reliable performance measurements for popular LLM models.TheFastest.ai provides reliable, daily updated performance benchmarks for popular Large Language Models (LLMs), measuring Time To First Token (TTFT) and Tokens Per Second (TPS) across different regions and prompt types.

- Free

-

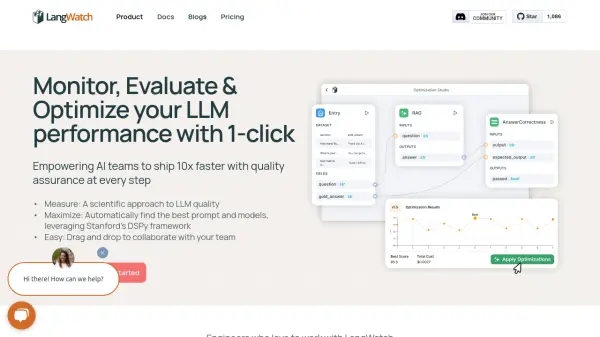

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-click

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-clickLangWatch empowers AI teams to ship 10x faster with quality assurance at every step. It provides tools to measure, maximize, and easily collaborate on LLM performance.

- Paid

- From 59$

LLM Optimize: Rank Higher in AI Engines Recommendations

LLM Optimize provides professional website audits to help you rank higher in LLMs like ChatGPT and Google's AI Overview, outranking competitors with tailored, actionable recommendations.

- Paid

Libretto: LLM Monitoring, Testing, and Optimization

Libretto offers comprehensive LLM monitoring, automated prompt testing, and optimization tools to ensure the reliability and performance of your AI applications.

- Freemium

- From $180

Conviction: The Platform to Evaluate & Test LLMs

Conviction is an AI platform designed for evaluating, testing, and monitoring Large Language Models (LLMs) to help developers build reliable AI applications faster. It focuses on detecting hallucinations, optimizing prompts, and ensuring security.

- Freemium

- From $249

phoenix.arize.com: Open-source LLM tracing and evaluation

Phoenix accelerates AI development with powerful insights, allowing seamless evaluation, experimentation, and optimization of AI applications in real time.

- Freemium

Feedback Intelligence: Analytics Tool for LLM-powered Products

Feedback Intelligence is an analytics platform for LLM-powered products like chatbots and voice agents, converting user interactions into actionable insights to improve performance and align with user intent.

- Freemium

Laminar: The AI engineering platform for LLM products

Laminar is an open-source platform that enables developers to trace, evaluate, label, and analyze Large Language Model (LLM) applications with minimal code integration.

- Freemium

- From $25

LLMLingua Series: Effectively Deliver Information to LLMs via Prompt Compression

LLMLingua Series offers prompt compression techniques to accelerate Large Language Model (LLM) inference, reduce costs, and enhance performance, especially in long context scenarios.

- Other

LLMO Metrics: Track and boost your brand presence in AI responses

LLMO Metrics tracks and optimizes your brand's visibility across major AI models like ChatGPT, Gemini, and Copilot. Monitor competitor rankings and ensure accurate brand representation in AI-generated answers.

- Free Trial

- From $87

Align: The Analytics Engine For Your Gen-AI Product

Align is an analytics solution that enables organizations to analyze and evaluate data from LLM-based conversational products, improving chatbot performance and user interactions.

- Contact for Pricing

Graphsignal: Unlock Faster AI

Graphsignal monitors, profiles, and accelerates hosted LLM inference and model APIs, providing full visibility and deep insights for AI optimization.

- Freemium

- From $375

PromptsLabs: A Library of Prompts for Testing LLMs

PromptsLabs is a community-driven platform providing copy-paste prompts to test the performance of new LLMs. Explore and contribute to a growing collection of prompts.

- Free

Literal AI: Ship reliable LLM Products

Literal AI streamlines the development of LLM applications, offering tools for evaluation, prompt management, logging, monitoring, and more to build production-grade AI products.

- Freemium

LLMate: Bring Marketing Data to Life So You Can Talk to It

LLMate is an AI-powered marketing analytics platform that consolidates data from multiple marketing sources and enables natural language interactions for deeper insights and automated reporting.

- Paid

- From $49

LLMMM: Monitor how LLMs perceive your brand

LLMMM helps brands track their presence in leading AI models like ChatGPT, Gemini, and Meta AI, providing real-time monitoring and brand safety insights.

- Free

EvalsOne: Evaluate LLMs & RAG Pipelines Quickly

EvalsOne is a platform for rapidly evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) pipelines using various metrics.

- Freemium

- From $19

Gentrace: Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It facilitates collaborative development and ensures high-quality LLM applications.

- Usage Based

Parea: Test and Evaluate your AI systems

Parea is a platform for testing, evaluating, and monitoring Large Language Model (LLM) applications, helping teams track experiments, collect human feedback, and deploy prompts confidently.

- Freemium

- From $150

Keywords AI: LLM monitoring for AI startups

Keywords AI is a comprehensive developer platform for LLM applications, offering monitoring, debugging, and deployment tools. It serves as a Datadog-like solution specifically designed for LLM applications.

- Freemium

- From $7

OpenRouter: A unified interface for LLMs

OpenRouter provides a unified interface for accessing and comparing various Large Language Models (LLMs), offering users the ability to find optimal models and pricing for their specific prompts.

- Usage Based

Autoblocks: Improve your LLM Product Accuracy with Expert-Driven Testing & Evaluation

Autoblocks is a collaborative testing and evaluation platform for LLM-based products that automatically improves through user and expert feedback, offering comprehensive tools for monitoring, debugging, and quality assurance.

- Freemium

- From $1750
